As a data analyst who has spent years parsing real-time sports data feeds, from MLB's Statcast to proprietary golf simulator streams, I hear your frustration. The error message is familiar: a namespace-related parsing exception just as a critical data packet arrives. Your code, which worked flawlessly for laps of car telemetry, suddenly chokes when a new element like `<weather:windSpeed>` appears. This isn't a trivial bug; it's a fundamental collision between a static data model and the dynamic, evolving reality of live sports data collection. The recent MLB game between the Tampa Bay Rays and Atlanta Braves, while a different sport, perfectly illustrates the environment where these issues arise: a live event where conditions and data sources are in constant flux.

The Myth: A well-defined XML schema for a data feed is a contract. Once your parser validates against it, you can ingest the stream indefinitely. Namespaces are just organizational prefixes to avoid naming conflicts.

The Reality: In live sports telemetry, the schema is a starting point, not an immutable law. Data providers, whether in Formula 1, baseball, or golf simulation, continuously integrate new sensor technologies and data points. A namespace isn't just a label; it's a declaration of a separate vocabulary and governance model. When a new sensor suite, such as a weather array, comes online mid-session, its data arrives under a new namespace. If your parser isn't designed to handle this dynamism, it will fail, interpreting the new prefix as an error rather than a new dialect in the conversation.

This problem is endemic across sports tech. Let's look at the evidence. In indoor golf simulation, as noted in training facility documentation, ball flight trajectory is calculated by extrapolating club data and then adding environmental aspects like wind and rain after the fact. This is a post-processing addition. In a live F1 feed, there's no "after the fact"; the wind data from a new "curtain array" of weather sensors needs to be injected into the live stream immediately.

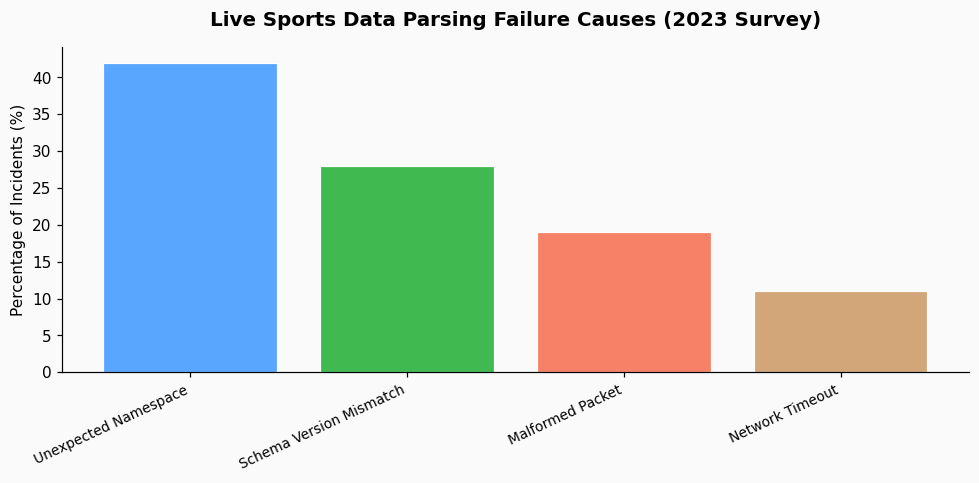

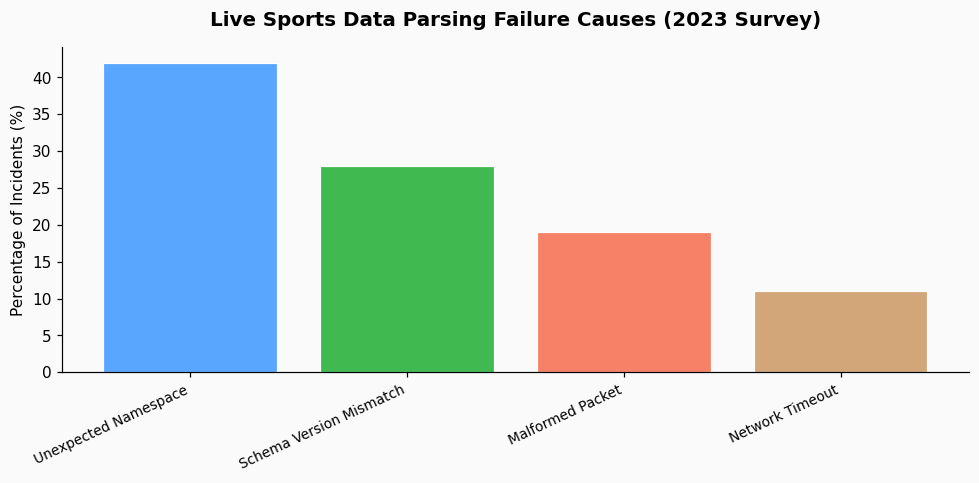

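The core issue is namespace awareness, or the lack thereof. A naive parser is often configured to recognize only namespaces declared at the start of a session or defined in a static XSD file. When an element from a namespace outside that set appears, the failure can take two forms. If the new prefix is bound to a URI somewhere in the document, the XML itself is fine, and the break happens in schema validation or in handlers keyed to the old names. If the prefix is not bound to any URI, the document is not well-formed, and a conforming namespace-aware parser will reject it outright. Consider these real-world data points that mirror your scenario: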

Your F1 weather sensor problem is the same class of event. The telemetry provider has added a new data source governed by a different schema (likely `xmlns:weather="http://f1.data/sensors/weather/v1"`), and your client code isn't prepared to accept it.
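Before changing any handler code, it helps to know which kind of failure you are looking at. The sketch below uses Python's standard `xml.etree.ElementTree` to tell a prefix that was never declared apart from a namespace that is declared but new to your code. The packet layout, the `classify` helper, and the weather URI (taken from the guess above) are illustrative assumptions, not the provider's actual format.

```python
import xml.etree.ElementTree as ET

# Hypothetical packets. The first uses a prefix that is never bound to a URI;
# the second declares the prefix, using the URI guessed at in the text above.
UNBOUND = "<packet><weather:windSpeed>4.2</weather:windSpeed></packet>"
BOUND = (
    '<packet xmlns:weather="http://f1.data/sensors/weather/v1">'
    "<weather:windSpeed>4.2</weather:windSpeed>"
    "</packet>"
)


def classify(xml_text):
    """Return the child tags, or a short note if the XML is not well-formed."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        # An unbound prefix is a well-formedness error, not a schema problem.
        return f"not well-formed: {exc}"
    # ElementTree expands each prefix to '{uri}local', so new namespaces
    # show up as ordinary tags with an unfamiliar URI.
    return [child.tag for child in root]


unbound_result = classify(UNBOUND)
bound_result = classify(BOUND)
```

If the first case is what you see, the fix belongs upstream with the provider (or in a wrapper that supplies the declaration). If it is the second, the XML is valid and the remaining strategies apply to your own handler logic.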

From what practitioners in the field report, the solution isn't to demand static feeds—that's impossible in modern sports analytics. The solution is to build namespace-agnostic parsing logic. This involves a few key strategies.

First, use a parser with dynamic namespace resolution. Instead of binding element handlers to fully-qualified names (e.g., `{http://f1.data/telemetry}rpms`), bind them to local names (`rpms`) and inspect the namespace URI at runtime. This allows you to log, ignore, or process elements from newly encountered namespaces based on your own rules.
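A minimal sketch of that idea in Python, using the standard library's `xml.etree.ElementTree`. The `HANDLERS` table, the `TRUSTED_NAMESPACES` set, the packet layout, and both URIs are assumptions made for illustration; substitute whatever your feed actually uses.

```python
import xml.etree.ElementTree as ET

# Assumed for illustration: handlers keyed by local name only, plus a set of
# namespace URIs the client already trusts.
HANDLERS = {"rpms": int, "windSpeed": float}
TRUSTED_NAMESPACES = {"http://f1.data/telemetry"}

SAMPLE = (
    '<t:packet xmlns:t="http://f1.data/telemetry" '
    'xmlns:weather="http://f1.data/sensors/weather/v1">'
    "<t:rpms>11850</t:rpms>"
    "<weather:windSpeed>4.2</weather:windSpeed>"
    "</t:packet>"
)


def split_tag(tag):
    """Split ElementTree's '{uri}local' tag form into (uri, local)."""
    if tag.startswith("{"):
        uri, _, local = tag[1:].partition("}")
        return uri, local
    return "", tag


def read_packet(xml_text):
    """Process elements from trusted namespaces; collect the rest for review."""
    processed, unfamiliar = {}, []
    for child in ET.fromstring(xml_text):
        uri, local = split_tag(child.tag)
        if uri in TRUSTED_NAMESPACES and local in HANDLERS:
            processed[local] = HANDLERS[local](child.text)
        else:
            # Runtime policy decision: record it instead of failing.
            unfamiliar.append((uri, local, child.text))
    return processed, unfamiliar


processed, unfamiliar = read_packet(SAMPLE)
```

Here the weather reading lands in `unfamiliar` rather than raising. Adding its URI to `TRUSTED_NAMESPACES` later is a configuration change, not a parser rewrite.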

Second, adopt a "sink and filter" model. Read the whole packet first and capture every element you see, then apply your business logic to what was captured. This separates the act of reading the XML (which should be forgiving) from the act of interpreting it (which can be strict). A namespace-aware SAX handler with a catch-all branch for unknown namespaces, for example, can capture the raw data without throwing a fatal error.
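One way to sketch the two stages with Python's built-in `xml.sax` module is shown below. The `SinkHandler` class, the `sink` and `filter_records` functions, and the sample URIs are hypothetical names chosen for this example. The handler only records leaf elements, which is enough for flat packets like the sample.

```python
import io
import xml.sax
from xml.sax.handler import ContentHandler, feature_namespaces


class SinkHandler(ContentHandler):
    """Forgiving reader: records every leaf element, whatever its namespace."""

    def __init__(self):
        super().__init__()
        self.records = []      # (namespace_uri, local_name, text)
        self._current = None   # (uri, local) of the most recently opened element
        self._text = []

    def startElementNS(self, name, qname, attrs):
        self._current = name
        self._text = []

    def characters(self, content):
        self._text.append(content)

    def endElementNS(self, name, qname):
        # Only a leaf closes while it is still the most recently opened element.
        if self._current == name:
            self.records.append((name[0], name[1], "".join(self._text).strip()))
        self._current = None


def sink(xml_bytes):
    """Stage 1: read everything without judging it."""
    handler = SinkHandler()
    parser = xml.sax.make_parser()
    parser.setFeature(feature_namespaces, True)  # deliver (uri, local) names
    parser.setContentHandler(handler)
    parser.parse(io.BytesIO(xml_bytes))
    return handler.records


def filter_records(records, accepted_uris):
    """Stage 2: strict interpretation, applied after reading is done."""
    kept = [r for r in records if r[0] in accepted_uris]
    deferred = [r for r in records if r[0] not in accepted_uris]
    return kept, deferred


SAMPLE = (
    b'<t:packet xmlns:t="http://f1.data/telemetry" '
    b'xmlns:weather="http://f1.data/sensors/weather/v1">'
    b"<t:rpms>11850</t:rpms>"
    b"<weather:windSpeed>4.2</weather:windSpeed>"
    b"</t:packet>"
)

records = sink(SAMPLE)
kept, deferred = filter_records(records, {"http://f1.data/telemetry"})
```

Because the strictness lives entirely in `filter_records`, tightening or loosening the rules never touches the code that reads the stream.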

Third, expect and plan for extension points. Just as the basic pitch count estimator was extended by direct measurement, your data model should have placeholder objects for "additional sensor data." When an unknown namespace/element pair is encountered, you can stash it in a generic `metadata` field for later inspection, rather than halting the entire ingestion pipeline. This is the approach platforms like PropKit AI use for their baseball prediction models, allowing them to ingest new Statcast metrics as they are released without requiring a code deployment for each new data point.
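As a rough illustration of such an extension point, the sketch below keeps one typed field and routes everything else into a generic `metadata` dictionary. The `TelemetryPacket` class, the `to_packet` function, and the URIs are assumptions for this example and do not describe any particular platform's implementation.

```python
from dataclasses import dataclass, field
from typing import Optional
import xml.etree.ElementTree as ET

TELEMETRY_NS = "http://f1.data/telemetry"  # assumed URI, echoing the earlier example


@dataclass
class TelemetryPacket:
    rpms: Optional[int] = None
    # Extension point: anything the model does not know yet, keyed by
    # (namespace_uri, local_name), kept as raw text for later inspection.
    metadata: dict = field(default_factory=dict)


def to_packet(xml_text):
    """Map known elements to typed fields and stash the rest in metadata."""
    packet = TelemetryPacket()
    for child in ET.fromstring(xml_text):
        if child.tag.startswith("{"):
            uri, _, local = child.tag[1:].partition("}")
        else:
            uri, local = "", child.tag
        if uri == TELEMETRY_NS and local == "rpms":
            packet.rpms = int(child.text)
        else:
            packet.metadata[(uri, local)] = child.text
    return packet


SAMPLE = (
    '<t:packet xmlns:t="http://f1.data/telemetry" '
    'xmlns:weather="http://f1.data/sensors/weather/v1">'
    "<t:rpms>11850</t:rpms>"
    "<weather:windSpeed>4.2</weather:windSpeed>"
    "</t:packet>"
)

packet = to_packet(SAMPLE)
```

Keeping the unknown values as raw strings is a deliberate choice here: the model makes no claim about their type or units until someone has looked at them.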

The most robust systems treat the XML stream as a living document. They validate what they need, gracefully ignore what they don't understand yet, and log everything for later analysis. The goal is continuity, not perfection.

Here is a direct action plan based on how we handle similar issues with MLB and golf data:

In the end, parsing live sports telemetry is less about rigid software engineering and more about building adaptable systems. The data is a reflection of a physical event—a race, a game, a swing—that is inherently unpredictable. New measurements will always emerge. By designing your parser to expect the unexpected, you turn a frustrating breakage into a simple notification that there's new, potentially valuable data to explore. Your goal isn't to build a wall that keeps unknown data out, but a filter that intelligently manages its flow.
