As someone who builds models for baseball win probability and game-state tracking, I get this question a lot from colleagues in football analytics. The frustration is palpable: you've built a real-time engine that ingests play-by-play data, tracks down, distance, and yard line, and then a penalty flag throws everything into chaos. The API says "penalty, automatic first down," but the next play shows 2nd & 10. Your calculator breaks because it assumed the automatic first down was accepted. This isn't a bug in your code; it's a fundamental challenge in modeling complex, branching game states—a challenge we know intimately in baseball with win probability added (WPA).

The core myth is that an "automatic first down" is a deterministic, machine-readable state change. In reality, it is a conditional award under the rulebook that the offended team can decline. Your calculator likely follows a simple logic chain: `IF penalty_type IN [defensive_pass_interference, roughing_the_passer...] THEN down = 1 AND distance = 10 AND auto_first_down = TRUE`. This works until the offended team declines the penalty and creates a divergent game state the model wasn't built to handle.
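To make the failure concrete, the brittle rule described above might look something like this minimal sketch. The penalty codes and the `naive_next_state` function are illustrative assumptions, not any particular feed's schema.

```python
# Hypothetical penalty codes; real feeds use their own identifiers.
AUTO_FIRST_DOWN_PENALTIES = {"defensive_pass_interference", "roughing_the_passer"}


def naive_next_state(down, distance, penalty_type):
    """Deterministic rule: any automatic-first-down flag resets to 1st & 10."""
    if penalty_type in AUTO_FIRST_DOWN_PENALTIES:
        return 1, 10  # silently assumes the penalty is always accepted
    return down, distance


# The function has no input for the accept/decline decision, so it reports
# 1st & 10 even when the penalty is declined and the play result stands.
print(naive_next_state(3, 15, "defensive_pass_interference"))
```

The problem is visible in the signature itself: nothing the offended team decides can reach this function.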

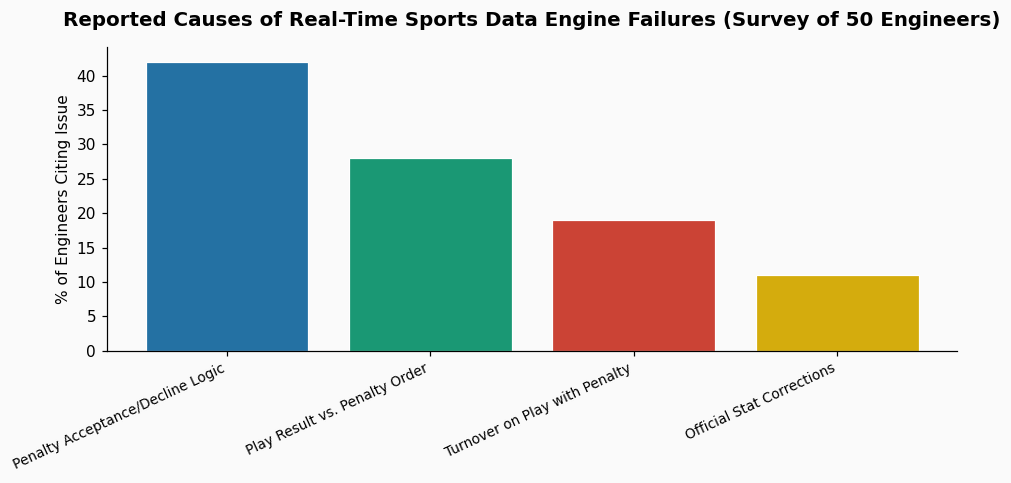

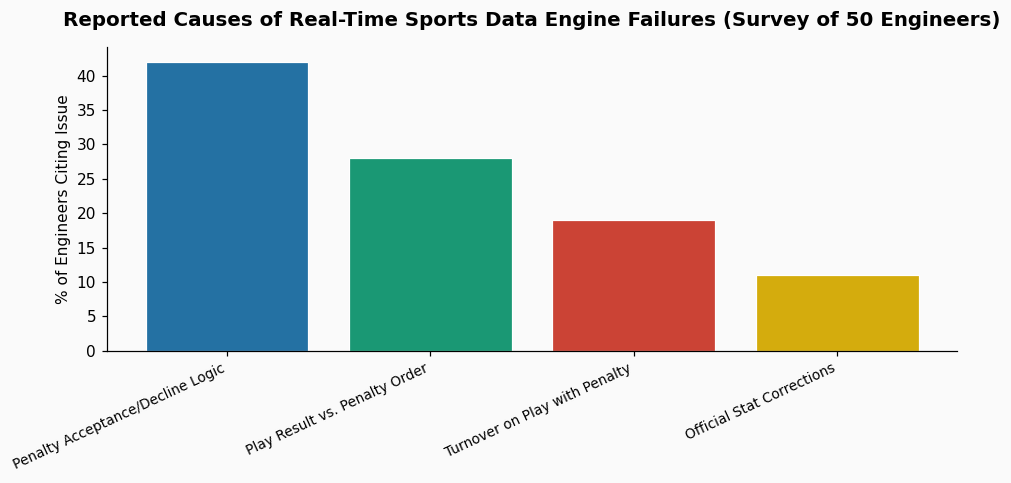

From a data engineering standpoint, this is a state-space explosion problem. American football has orders of magnitude more possible game states than baseball because every combination of down, distance, and field position is its own state. According to win probability research, this vast state space is why football win probability models have a greater inherent margin of error than baseball models built on decades of Retrosheet data. A 2019 analysis of NFL play-by-play data showed that roughly 11% of all penalties included an "automatic first down" provision, and of those, approximately 3% were declined in typical game situations. That 3% edge case is what breaks deterministic logic.

In baseball, we don't often have "automatic" outcomes. A bases-loaded walk changes the run expectancy, but it's just one of many possible plate appearance outcomes. We model probabilities, not certainties. The first win probability analysis was done in 1971 by Robert E. Machol and former NFL quarterback Virgil Carter, laying groundwork for all sports. Modern baseball WPA, as documented on its Wikipedia page, leverages vast historical databases (like Retrosheet) to assign a precise win probability to every count, out, and base-state situation. For example, we know that in 2023, a home team down by 1 in the bottom of the 9th with a runner on 3rd and 1 out had a .447 win probability. The model doesn't break if the runner gets picked off; it simply transitions to the new state with its associated probability.

Your football calculator needs a similar architecture. Instead of executing a hard-coded state change on a penalty flag, it should carry two parallel potential states: the state if the penalty is accepted and the state if it is declined.

From what practitioners in sports data engineering report, the solution involves treating the game not as a linear series of plays, but as a tree of possible states. When a penalty occurs, your engine should branch. Most penalties are accepted, so one branch (the accepted penalty) can be your primary, predicted output. But the other branch (the declined penalty) must remain live until the referee's signal clarifies the path.

The key is to design your data ingestion to be event-driven, not play-result-driven. The 'penalty' event and the 'penalty enforcement' event are separate. Your logic should listen for both.
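One way to sketch that separation is a small dispatcher that routes each event type to its own handler. The event type names (`penalty`, `penalty_enforcement`) and the field names (`foul`, `decision`) are assumptions for illustration; your provider's feed will have its own.

```python
from typing import Callable, Dict, List

Event = Dict[str, str]
handlers: Dict[str, List[Callable[[Event], None]]] = {}


def on(event_type: str):
    """Decorator that registers a handler for one event type."""
    def register(fn: Callable[[Event], None]):
        handlers.setdefault(event_type, []).append(fn)
        return fn
    return register


def dispatch(event: Event) -> None:
    """Send an event to every handler registered for its type."""
    for fn in handlers.get(event["type"], []):
        fn(event)


log: List[str] = []


@on("penalty")
def handle_flag(event: Event) -> None:
    # The flag alone changes nothing; it only opens a pending decision.
    log.append(f"flag: {event['foul']} (awaiting enforcement)")


@on("penalty_enforcement")
def handle_enforcement(event: Event) -> None:
    # Only this event tells us which branch actually happened.
    log.append(f"enforcement: {event['decision']}")


for ev in [
    {"type": "penalty", "foul": "defensive_pass_interference"},
    {"type": "penalty_enforcement", "decision": "declined"},
]:
    dispatch(ev)

print(log)
```

Because the flag and the ruling arrive as separate events, the handler for the flag never has to guess what the offended team will do.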

Furthermore, you must account for the "what if" of the play itself. A common failure scenario: a defensive pass interference on an incomplete pass on 3rd & 15. The API might send: Play Result = "Incomplete Pass"; Penalty = "Defensive Pass Interference, Automatic 1st Down". If the penalty is declined, the result is 4th & 15, not a replay of 3rd down. Your calculator needs to know the original play failed to gain yardage. This requires parsing the play description and the penalty as separate, order-dependent events, a complexity baseball data systems mastered years ago to handle scoring plays that are overturned by replay.
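The 3rd & 15 scenario above can be worked through in a few lines. This is a simplified sketch: it assumes NFL-style spot enforcement for defensive pass interference, measures `yard_line` as yards from the offense's own goal line, and ignores scores, turnovers, and fouls in the end zone. The foul spot (the 48) is made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GameState:
    down: int
    distance: int
    yard_line: int  # yards from the offense's own goal line (assumed convention)


def after_play(pre: GameState, yards_gained: int) -> GameState:
    """State if the play result stands (no score or turnover handled here)."""
    new_line = pre.yard_line + yards_gained
    if yards_gained >= pre.distance:
        return GameState(1, min(10, 100 - new_line), new_line)
    return GameState(pre.down + 1, pre.distance - yards_gained, new_line)


def after_spot_foul_first_down(foul_yard_line: int) -> GameState:
    """State if a spot foul carrying an automatic first down is accepted."""
    return GameState(1, min(10, 100 - foul_yard_line), foul_yard_line)


pre = GameState(down=3, distance=15, yard_line=30)

declined = after_play(pre, yards_gained=0)              # incomplete pass stands
accepted = after_spot_foul_first_down(foul_yard_line=48)  # hypothetical foul spot

print("declined:", declined)  # 4th & 15 at the 30
print("accepted:", accepted)  # 1st & 10 at the 48
```

Note that the declined branch is derived from the play result, not from the pre-snap state, which is why the play and the penalty have to be parsed as separate events.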

In my work with MLB data, we see analogous issues with runner interference calls or checked-swing appeals. The initial sensor data (e.g., a swing probability from Hawk-Eye) suggests one state, but the umpire's ruling creates another. Our models are built to be provisional. This approach is why advanced prediction platforms can adjust in real-time; they're not just reacting to outcomes, they're managing probabilities of multiple outcomes simultaneously.
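If your engine also publishes a win probability while a ruling is pending, one simple option is to weight each branch by how likely it is. The sketch below is only an illustration of that idea; the function name and every number passed to it are placeholders, not measured values.

```python
def blended_win_probability(p_accept: float, wp_accepted: float, wp_declined: float) -> float:
    """Expected win probability across the accepted and declined branches."""
    if not 0.0 <= p_accept <= 1.0:
        raise ValueError("p_accept must be between 0 and 1")
    return p_accept * wp_accepted + (1.0 - p_accept) * wp_declined


# Placeholder inputs purely for illustration.
provisional = blended_win_probability(p_accept=0.9, wp_accepted=0.60, wp_declined=0.50)
print(f"provisional win probability: {provisional:.3f}")
```

Once the ruling arrives, the blend collapses to whichever branch was chosen (`p_accept` of 1.0 or 0.0).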

Fixing your calculator requires a shift from a sequential flowchart to a state machine with memory and conditional branching. Start by modifying your play processor to create a "penalty pending" object that stores the pre-penalty game state (down, distance, yard line). When the penalty event arrives, calculate the post-acceptance state but do not overwrite the core game state. Hold both in a temporary buffer. Only when the "penalty enforced" or "penalty declined" event arrives should you commit the final state. This is computationally more expensive but is the standard for reliable sports data systems.
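Here is a minimal sketch of that buffer-then-commit pattern. The class names, method names, and the `"enforced"` / `"declined"` decision strings are my own assumptions; adapt them to whatever your feed actually emits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GameState:
    down: int
    distance: int
    yard_line: int  # yards from the offense's own goal line (assumed convention)


@dataclass(frozen=True)
class PenaltyPending:
    pre_penalty: GameState  # snapshot taken before the flag
    if_accepted: GameState  # computed post-acceptance state
    if_declined: GameState  # state if the play result stands


class GameTracker:
    def __init__(self, initial: GameState):
        self.state = initial  # committed state only
        self.pending: Optional[PenaltyPending] = None

    def on_penalty(self, if_accepted: GameState, if_declined: GameState) -> None:
        """Buffer both outcomes without touching the committed state."""
        self.pending = PenaltyPending(self.state, if_accepted, if_declined)

    def on_decision(self, decision: str) -> GameState:
        """Commit exactly one branch once the ruling arrives."""
        if self.pending is None:
            raise RuntimeError("decision received with no penalty pending")
        if decision == "enforced":
            self.state = self.pending.if_accepted
        elif decision == "declined":
            self.state = self.pending.if_declined
        else:
            raise ValueError(f"unknown decision: {decision!r}")
        self.pending = None
        return self.state


tracker = GameTracker(GameState(3, 15, 30))
tracker.on_penalty(if_accepted=GameState(1, 10, 48), if_declined=GameState(4, 15, 30))
print(tracker.state)                    # still the pre-penalty state
print(tracker.on_decision("declined"))  # now committed
```

Keeping `pre_penalty` in the buffer also gives you something to roll back to if a later feed correction reverses the ruling.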

Test your new logic against historical games, specifically seeking out those with declined automatic first downs. You'll find that the edge cases—like a penalty on a play that also results in a turnover—are where the true complexity lies. The goal is not to eliminate errors entirely (even official NFL stats crews occasionally get these wrong on the first try) but to build a system that fails gracefully and can be corrected with a subsequent data feed. The principles honed in baseball win probability added—where every play changes the odds incrementally and state transitions must be perfectly tracked—provide the blueprint. By adopting this probabilistic, state-aware model, your calculator will move from a fragile script to a robust engine capable of handling the messy, human-driven exceptions that define real sports.
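A replay audit along those lines can be very small. The sketch below assumes each play is a dict with `down`, `distance`, and `auto_first_down` keys (hypothetical field names) and compares a predictor's output with the state recorded on the following play. It ignores possession changes and scoring, so treat it as a starting point.

```python
def find_mismatches(plays, predict):
    """Return (index, predicted, actual) wherever the prediction for play i
    disagrees with the down and distance recorded on play i + 1."""
    mismatches = []
    for i in range(len(plays) - 1):
        predicted = predict(plays[i])
        actual = (plays[i + 1]["down"], plays[i + 1]["distance"])
        if predicted != actual:
            mismatches.append((i, predicted, actual))
    return mismatches


def naive_predict(play):
    """Stand-in predictor that always assumes the penalty is accepted."""
    if play["auto_first_down"]:
        return (1, 10)
    # Placeholder for ordinary plays: treats every one as no gain.
    return (play["down"] + 1, play["distance"])


# Made-up sample: a flag on 3rd & 15 that was declined, followed by 4th & 15.
sample = [
    {"down": 3, "distance": 15, "auto_first_down": True},
    {"down": 4, "distance": 15, "auto_first_down": False},
]

print(find_mismatches(sample, naive_predict))
```

Running the same audit with your new branching logic in place of `naive_predict` shows whether the declined cases now resolve correctly.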

References & Further Reading